🔴 CRITICAL WARNING: Evaluation Artifact – NOT Peer-Reviewed Science. This document is 100% AI-Generated Synthetic Content. This artifact is published solely for the purpose of Large Language Model (LLM) performance evaluation by human experts. The content has NOT been fact-checked, verified, or peer-reviewed. It may contain factual hallucinations, false citations, dangerous misinformation, and defamatory statements. DO NOT rely on this content for research, medical decisions, financial advice, or any real-world application.


Abstract

Access to visual art has historically remained one of the most persistent gaps in cultural participation for individuals who are blind or have severe visual impairments. While tactile reproductions of artworks have existed in various museum contexts, the systematic study of how layered tactile exploration—structured according to semantic units such as foreground objects, midground figures, and background elements—affects artwork recognition and comprehension is still in its early stages. This paper presents a case study examining the design, implementation, and empirical evaluation of a multimodal tactile display system that presents artworks divided into discrete semantic layers to visually impaired users. Using a refreshable pin-array tactile display integrated with audio descriptions, participants explored well-known artworks in sequential semantic layers and were subsequently assessed on recognition accuracy, spatial understanding, and affective engagement. Results indicate that layered semantic presentation significantly improved recognition scores and spatial recall compared to flat, unstructured tactile renderings. These findings carry implications for the design of accessible human-computer interaction (HCI) systems, multimodal museum technologies, and broader inclusive computing frameworks. The study contributes to the emerging field of tactile HCI by providing empirical evidence for the utility of semantic decomposition as a design principle for non-visual artwork exploration.

Keywords: HCI, multimodality, tactile display, visual impairment, artwork accessibility, semantic segmentation, refreshable tactile graphics, inclusive design

1. Introduction

The experience of art is frequently discussed as a visual phenomenon, one rooted in the perception of color, form, light, and spatial composition. For the estimated 2.2 billion people worldwide who have some form of vision impairment [1], this framing presents a profound exclusionary barrier. Museums, galleries, and digital cultural heritage platforms have long grappled with how to make visual content accessible without reducing it to verbal description alone. The tactile dimension—allowing users to feel representations of artworks—has attracted increasing interest over the past two decades, particularly within the fields of HCI, assistive technology, and accessibility research [2], [3].

Conventional tactile reproductions of artworks are typically produced as embossed static prints or thermoformed plastic sheets. While these approaches provide tangible access to some visual information, they present well-documented limitations: detail compression, ambiguity in depth cues, and the absence of dynamic feedback [4]. More recently, refreshable pin-array tactile displays—devices capable of raising and lowering discrete pins under software control—have opened new possibilities for delivering dynamic, interactive tactile content [5]. These devices, originally developed primarily for rendering Braille text, have progressively evolved into platforms capable of conveying complex two-dimensional spatial information, including graphic content such as maps, diagrams, and artistic compositions [6].

A fundamental challenge in rendering artworks tactilely is the problem of information density. Visual artworks frequently contain multiple overlapping spatial layers—background, midground, and foreground—which a sighted viewer parses through mechanisms of visual depth perception, color contrast, and figure-ground segregation. When collapsed into a single flat tactile representation, these layers can produce a cluttered, confusing tactile surface that is difficult for users to interpret [7]. One promising solution, explored in preliminary work by several research groups [8], [9], is to decompose the artwork into its constituent semantic units and present these units sequentially or interactively, allowing users to build a mental model of the whole from structured partial representations.

This paper reports a formal case study investigating exactly this approach. We designed and implemented a multimodal tactile display system capable of rendering semantic layers of artwork images under user control, supplemented by spatial audio descriptions. We recruited a cohort of adult participants with congenital or acquired blindness and measured the effects of layered semantic study on subsequent artwork recognition, spatial recall, and subjective experience. Our central research questions were as follows:

- Does the study of artworks through sequentially presented semantic layers improve recognition accuracy compared to undifferentiated tactile exploration?

- Does layered presentation improve spatial recall of compositional elements?

- How do users perceive and rate the usability and affective value of layered multimodal artwork exploration?

The remainder of this paper is organized as follows. Section 2 provides a review of relevant prior work in tactile display technology, accessible art, and multimodal HCI. Section 3 describes the system architecture and the specific artworks selected for the study. Section 4 details the implementation of the semantic segmentation pipeline, the tactile display hardware, and the audio description framework. Section 5 describes the study design and participant pool. Section 6 presents experimental results. Sections 7 and 8 offer discussion and conclusions, respectively.

2. Background and Related Work

2.1 Tactile Graphics and Accessible Art

The history of tactile representations of artwork stretches back to at least the late nineteenth century, when embossed books for blind readers began to include diagrammatic illustrations [10]. In contemporary practice, the production of tactile graphics for museums and educational settings typically follows guidelines established by organizations such as the American Printing House for the Blind, which prescribe principles including simplification, line weight differentiation, and avoidance of crossing lines [11]. These guidelines were largely developed empirically, drawing on observations of what blind users found comprehensible in practice rather than from formal cognitive models of haptic perception.

Parallel work in cognitive science has established that haptic object recognition follows different principles than visual recognition. Klatzky and Lederman's foundational research on haptic exploratory procedures demonstrated that humans use specific hand movements—such as lateral motion for texture and enclosure for volume—when identifying objects through touch [12]. This work implies that the structure of tactile content, and how it is organized for exploration, matters as much as the fidelity of the representation. More recent HCI research has extended these findings to the design of tactile graphics and displays, showing that organization and sequential disclosure can reduce cognitive load and improve comprehension [13].

2.2 Refreshable Tactile Displays in HCI

Refreshable tactile displays represent a significant technological advance over static embossed materials. The earliest commercial devices, such as the Metec pin-array display and the INSITE Tactile Display, were designed primarily for Braille reading. More recent research prototypes, including the KGS DotView and the work by Follmer et al. on programmable matter [14], have demonstrated considerably higher pin density and faster refresh rates suitable for graphic rendering.

In the HCI community, refreshable tactile displays have been evaluated across a range of tasks. Studies have examined their utility for conveying geographic maps to blind users [15], for presenting data visualizations [16], and for supporting tactile drawing and sketching [17]. Across these domains, a consistent finding emerges: the usability of tactile displays is substantially affected by the complexity of the rendered image and the availability of supplementary information in other modalities [18]. This last point directly motivates a multimodal design approach.

2.3 Multimodal Interfaces for Visually Impaired Users

Multimodality in HCI refers to the use of multiple sensory channels—visual, auditory, tactile, and others—in a coordinated manner to support user tasks [19]. For visually impaired users, this typically means combining tactile feedback with audio. Research on multimodal interfaces for this population has consistently demonstrated benefits over single-modality approaches. Brewster et al. showed that audio augmentation of tactile graphs improved data comprehension significantly [20]. More recently, work by Palani et al. examined the integration of spatial audio with tactile maps and found improvements in navigation efficiency and confidence [21].

In the domain of art accessibility specifically, some museums have implemented audio guides synchronized with tactile reproductions, effectively creating multimodal exploration experiences. The Prado Museum's "El Prado para Todos" project and similar initiatives at the Louvre and the British Museum have been studied qualitatively [22]. These studies report positive user experiences but lack controlled experimental comparisons with alternative presentation conditions, a gap that the present work seeks partially to address.

2.4 Semantic Segmentation for Tactile Rendering

The concept of semantic segmentation—partitioning an image into regions corresponding to semantically meaningful categories—has become a major area of research in computer vision, driven by deep learning advances [23]. For tactile applications, semantic segmentation offers a principled basis for deciding which elements of an image should be rendered at a given level of detail and in which sequence. Early work by Rotard et al. applied image segmentation techniques to generate tactile representations of web content [24]. More recent work has begun to apply modern convolutional neural network–based segmentation to tactile display content generation [25].

For artwork in particular, semantic segmentation must contend with the significant variation across artistic styles and the absence of clear object boundaries that characterizes impressionist, expressionist, and abstract works. Hybrid approaches that combine automated segmentation with expert annotation have been proposed as a practical solution for cultural heritage institutions [26]. The present system adopts a similar hybrid approach, described in detail in Section 4.

3. Case Description

3.1 Motivation and Scope

The immediate motivation for this study arose from an ongoing accessibility project at a regional art museum, which had invested in a refreshable tactile display terminal for visitor use. Initial informal observations by museum educators suggested that visitors who were blind often found the undifferentiated tactile rendering of artworks confusing and fatiguing. They could feel that something was present on the display but struggled to determine what it was or how its parts related to each other. This observation aligned with existing literature on haptic complexity and cognitive load [13], [27], and led to the research question at the heart of this study.

The case study focuses on three artworks selected for their varying degrees of compositional complexity and their familiarity to general audiences:

- The Starry Night (Vincent van Gogh, 1889) — chosen for its strong figure-ground contrast between sky and village but complex internal texture.

- Girl with a Pearl Earring (Johannes Vermeer, c. 1665) — chosen for its simpler compositional structure with a dominant foreground figure against a dark background.

- American Gothic (Grant Wood, 1930) — chosen for its tripartite layering of foreground figures, midground farmhouse, and background sky/fields.

These three artworks span different levels of semantic complexity and provide a range of conditions for testing layered exploration.

3.2 Target User Population

The target population consisted of adults with congenital or acquired blindness who had no functional vision (defined as light perception only or less). This decision was made deliberately to exclude low-vision users, whose partial vision would confound results by allowing visual supplementation of the tactile experience. All participants were recruited from networks associated with regional schools for the blind and blindness support organizations. The inclusion of both congenitally blind and later-blind participants allowed for exploratory analysis of whether prior visual experience affected outcomes, a question raised in earlier haptic cognition literature [28].

4. System Implementation

4.1 Hardware Platform

The tactile display system used in this study was based on a commercial 40×40 pin-array refreshable tactile display (approximately 80×80 mm active area) capable of individual pin actuation at two height levels (raised or retracted). The display was interfaced with a standard desktop computer via USB and driven by a custom software stack developed in Python. An overhead camera tracked the user's hand position to enable context-sensitive audio feedback, an approach adapted from prior work on camera-augmented tactile displays [29].

Audio output was delivered through open-ear headphones to preserve spatial audio cues. The audio subsystem used a binaural rendering engine to localize sound sources within the plane of the tactile display, providing directional audio feedback consistent with the spatial position of features on the display surface. This design is consistent with established principles for spatially coherent multimodal feedback in tactile HCI [18], [21].

[Conceptual diagram (author-generated): Figure 1 shows the system architecture. A block diagram illustrates the following components connected by arrows: (1) Image Database containing the three artworks; (2) Semantic Segmentation Module, which outputs layer-separated image data; (3) Tactile Rendering Engine, which translates segmented layers into pin-array commands; (4) the Refreshable Tactile Display hardware; (5) the Audio Description Module, connected to the hand-tracking camera; and (6) the User interacting with the display. Bidirectional arrows between the User and the Display/Audio components indicate interactive exploration.]

4.2 Semantic Segmentation Pipeline

The artwork segmentation pipeline followed a hybrid semi-automated approach. For each artwork, an automated initial segmentation was performed using a pretrained DeepLab v3+ model [30] fine-tuned on a small set of annotated artwork images. The model's output was reviewed and corrected by two trained art historians and an accessibility consultant, who ensured that the semantic boundaries reflected meaningful compositional divisions rather than purely pixel-level gradients.

Each artwork was decomposed into three primary semantic layers:

- Layer 1 (Background): Sky, landscape, or undifferentiated background elements.

- Layer 2 (Midground): Secondary objects, architectural elements, or supporting figures.

- Layer 3 (Foreground): Primary figures, dominant objects, or the main subject of the composition.

A fourth layer, Layer 0 (Outline/Composite), presented the complete artwork as a simplified line drawing, providing context before detailed layer exploration began. This decision was informed by the literature on progressive disclosure in tactile graphics, which suggests that an initial overview aids subsequent detailed exploration [7], [11].

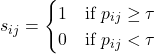

The binary threshold used to convert each segmented layer into a pin-raised/retracted pattern was determined empirically. For a given layer image $I_k$ with pixel intensity values $I_k(x, y)$ normalized to [0, 1], the pin state $P_k(x, y)$ at position $(x, y)$ was defined as:

$$P_k(x, y) = \begin{cases} 1 & \text{if } I_k(x, y) \geq \tau \\ 0 & \text{otherwise} \end{cases} \tag{1}$$

where $\tau$ is the binarization threshold. Following a pilot calibration with three sighted participants trained in tactile graphic evaluation, the value of $\tau$ was selected as providing an acceptable balance between detail preservation and noise reduction. This threshold was held constant across all artworks and all layers.
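The thresholding rule of Equation (1) can be sketched in a few lines of NumPy. This is a minimal illustration, not the study's code: the function name `binarize_layer`, the use of `>=` at the boundary, the convention that brighter pixels map to raised pins, and the demo threshold of 0.5 are all assumptions made here for illustration.

```python
import numpy as np


def binarize_layer(layer: np.ndarray, tau: float) -> np.ndarray:
    """Return 1 (pin raised) where normalized intensity >= tau, else 0 (retracted)."""
    if layer.min() < 0.0 or layer.max() > 1.0:
        raise ValueError("layer intensities must be normalized to [0, 1]")
    return (layer >= tau).astype(np.uint8)


# Hypothetical 3x3 layer and threshold, for illustration only.
demo_layer = np.array([[0.1, 0.6, 0.9],
                       [0.4, 0.5, 0.2],
                       [0.8, 0.3, 0.7]])
demo_pins = binarize_layer(demo_layer, tau=0.5)
```

Holding `tau` fixed across layers, as the text describes, keeps the mapping from intensity to pin state identical for every layer of every artwork.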

To address the pin resolution constraint of the 40×40 array, each segmented layer was downsampled from the original high-resolution image to a 40×40 binary matrix using adaptive area-averaging, which preserves the largest continuous features within each semantic region. The downsampling function $D$ applied to each $W \times H$ pixel layer image to produce the 40×40 pin matrix $M_k = D(P_k)$ follows:

$$M_k(i, j) = \left\lfloor \frac{1}{s_x s_y} \sum_{u=0}^{s_x - 1} \sum_{v=0}^{s_y - 1} P_k(i s_x + u,\; j s_y + v) + \frac{1}{2} \right\rfloor \tag{2}$$

where $s_x = W/40$, $s_y = H/40$, and $\lfloor \cdot \rfloor$ denotes the floor function. Equation (2) ensures that each output pin cell $M_k(i, j)$ reflects the majority tone of its corresponding source image region, effectively implementing a local averaging binarization compatible with the display's binary pin states.
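The majority-tone behavior described for Equation (2) can be illustrated with a plain block-majority downsampler. This sketch is an assumption-laden simplification: it uses fixed rectangular blocks rather than the adaptive area-averaging mentioned in the text, the name `downsample_majority` is hypothetical, and ties (exactly half the block raised) are resolved toward a raised pin.

```python
import numpy as np


def downsample_majority(pins: np.ndarray, out_size: int = 40) -> np.ndarray:
    """Reduce a binary H x W image to out_size x out_size by block majority vote."""
    height, width = pins.shape
    if height < out_size or width < out_size:
        raise ValueError("source image must be at least out_size pixels per side")
    out = np.zeros((out_size, out_size), dtype=np.uint8)
    for i in range(out_size):
        # Row bounds of the source block that feeds output row i.
        r0, r1 = (i * height) // out_size, ((i + 1) * height) // out_size
        for j in range(out_size):
            c0, c1 = (j * width) // out_size, ((j + 1) * width) // out_size
            block_mean = pins[r0:r1, c0:c1].mean()
            # floor(mean + 0.5): raised when at least half of the block is raised.
            out[i, j] = int(np.floor(block_mean + 0.5))
    return out


# Hypothetical 4x4 binary layer reduced to 2x2, for illustration only.
demo = np.array([[1, 1, 0, 0],
                 [1, 0, 0, 0],
                 [0, 0, 1, 1],
                 [0, 1, 1, 1]], dtype=np.uint8)
demo_small = downsample_majority(demo, out_size=2)
```

Integer block bounds let the sketch handle source sizes that are not exact multiples of the output size, at the cost of slightly uneven block areas.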

[Conceptual diagram (author-generated): Figure 2 illustrates the four-layer decomposition of "American Gothic." Four panels arranged left-to-right show: Panel A (Layer 0) — a simplified complete outline of the full scene; Panel B (Layer 1) — only the background sky and distant fields rendered as a tactile pattern; Panel C (Layer 2) — the farmhouse with its distinctive Gothic window; Panel D (Layer 3) — the two foreground figures. Each panel is labeled with its layer name and a description of the pin-raised areas in white against a gray background.]

4.3 Audio Description Framework

Audio descriptions were authored collaboratively by an art historian and an accessibility specialist for each layer of each artwork. Each description was structured to proceed from global to local spatial information, following guidelines for tactile graphic audio annotation [11], [21]. Descriptions were recorded by a professional narrator and stored as audio files indexed to specific pin-array regions.

The hand-tracking system triggered contextually appropriate audio descriptions when the user's finger entered a designated region of the display, implementing a form of spatially indexed audio. For example, in the foreground layer of Girl with a Pearl Earring, moving a finger toward the upper portion of the display triggered a description of the subject's face and headscarf, while moving toward the lower portion triggered a description of the collar and earring. This event-driven multimodal design is consistent with the interaction model described by Brewster et al. [20] and with the HCI principle of continuous feedback in exploratory interfaces [31].

The system also supported a free-form exploratory mode in which users could request descriptions at will by tapping any point on the display, and a guided tour mode in which the system led the user through each layer sequentially with structured narration. Both modes were available to all participants, allowing individual exploration style preferences to be accommodated.
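The region-triggered audio behavior described above can be sketched as a lookup from pin coordinates to recorded clips, with a small state machine that fires only when the finger enters a new region. Everything named here is hypothetical: the `AudioRegion` and `RegionTrigger` classes, the rectangular region shape, the row boundaries, and the clip file names are illustrative assumptions, not details of the study's software.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class AudioRegion:
    """A rectangular block of pins linked to one recorded description."""
    row_min: int
    row_max: int  # inclusive
    col_min: int
    col_max: int  # inclusive
    clip: str     # hypothetical audio file name

    def contains(self, row: int, col: int) -> bool:
        return (self.row_min <= row <= self.row_max
                and self.col_min <= col <= self.col_max)


def find_clip(regions: Iterable[AudioRegion], row: int, col: int) -> Optional[str]:
    """Return the clip for the first region containing (row, col), if any."""
    for region in regions:
        if region.contains(row, col):
            return region.clip
    return None


class RegionTrigger:
    """Emit a clip only when the tracked finger moves into a different region."""

    def __init__(self, regions: Iterable[AudioRegion]) -> None:
        self.regions = list(regions)
        self.current: Optional[str] = None

    def update(self, row: int, col: int) -> Optional[str]:
        clip = find_clip(self.regions, row, col)
        changed = clip != self.current
        self.current = clip
        return clip if (changed and clip is not None) else None


# Hypothetical split of the 40x40 foreground layer into upper and lower halves.
foreground_regions = [
    AudioRegion(0, 19, 0, 39, "face_and_headscarf.wav"),
    AudioRegion(20, 39, 0, 39, "collar_and_earring.wav"),
]
trigger = RegionTrigger(foreground_regions)
```

Calling `trigger.update(row, col)` once per tracked frame would return a clip name only on region entry, which avoids restarting a description while the finger stays inside the same region.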

5. Study Design and Methodology

5.1 Participants

A total of 24 adults with blindness (no functional vision, as defined above) participated in the study. Twelve participants had congenital blindness (no prior visual experience), and twelve had acquired blindness (onset after age 10). Ages ranged from 22 to 61 years (M = 38.4, SD = 11.2). All participants reported no prior tactile experience with the specific artworks selected. Participants were recruited through blindness support organizations and provided written informed consent through accessible consent forms read aloud by the researcher. Ethical approval was obtained from the relevant institutional review board prior to recruitment.

| Characteristic | Congenitally Blind (n=12) | Acquired Blindness (n=12) | Total (N=24) |
|---|---|---|---|
| Mean Age (years) | 36.1 (SD=10.8) | 40.7 (SD=11.5) | 38.4 (SD=11.2) |
| Female | 7 | 6 | 13 |
| Male | 5 | 6 | 11 |
| Prior Braille literacy | 12 (100%) | 8 (67%) | 20 (83%) |
| Prior tactile graphics experience | 9 (75%) | 6 (50%) | 15 (63%) |

5.2 Experimental Design

A within-subjects counterbalanced design was used. Each participant explored all three artworks and experienced both of the following conditions, with each artwork assigned to exactly one condition:

- Condition A (Layered): The artwork was presented in four sequential semantic layers (Layer 0 → Layer 1 → Layer 2 → Layer 3) with the associated multimodal audio descriptions.

- Condition B (Flat): The artwork was presented as a single composite pin-array rendering (equivalent to Layer 0 of Condition A) with the same audio descriptions available but indexed to the whole image rather than to semantic layers.

Each participant explored each artwork in one condition only, and the assignment of artworks to conditions was counterbalanced across participants using a Latin square design to control for order effects and artwork difficulty. Each exploration session was limited to 10 minutes per artwork to standardize exposure time across conditions.
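One way to generate counterbalanced presentation orders is a cyclic Latin square, sketched below. This is only an illustration of the general technique: the source does not state which Latin square was used or how the two conditions were distributed over three artworks, so the function names and the participant-to-row mapping are assumptions, and condition assignment is deliberately left out.

```python
from collections import Counter

ARTWORKS = ["The Starry Night", "Girl with a Pearl Earring", "American Gothic"]


def cyclic_latin_square(items):
    """Build an n x n square in which each item appears once per row and column."""
    n = len(items)
    return [[items[(row + col) % n] for col in range(n)] for row in range(n)]


def presentation_order(participant_index, items=ARTWORKS):
    """Assign a participant to a row of the square by cycling through the rows."""
    square = cyclic_latin_square(items)
    return square[participant_index % len(items)]


def order_counts(n_participants, items=ARTWORKS):
    """Count how many participants receive each presentation order."""
    return Counter(tuple(presentation_order(p, items)) for p in range(n_participants))


square = cyclic_latin_square(ARTWORKS)
counts = order_counts(24)
```

With 24 participants and three rows, each presentation order is used equally often under this mapping, so every artwork appears in each serial position the same number of times.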

5.3 Outcome Measures

Three primary outcome measures were used:

- Recognition Accuracy (RA): Following a 24-hour delay, participants were presented with six tactile stimuli (the three study artworks and three previously unexplored foils of similar style) and asked to identify whether each was familiar. RA was computed as the hit rate minus the false-alarm rate, normalized to [0, 1].

- Spatial Recall Score (SRS): Immediately following exploration, participants were asked to verbally describe the spatial arrangement of elements in the artwork (e.g., "Where is the main figure relative to the background?"). Responses were rated on a 5-point scale by two independent raters masked to condition assignment. Inter-rater reliability was assessed using Cohen's kappa.

- Usability and Affective Rating (UAR): A modified version of the System Usability Scale (SUS) [32] combined with items from the Geneva Appraisal Questionnaire adapted for art experience [33] was administered verbally following each exploration session.
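The RA measure above is described as hits minus false alarms normalized to [0, 1], but the exact normalization is not given. A minimal sketch of one plausible reading follows: it maps the difference between hit rate and false-alarm rate from [-1, 1] onto [0, 1], which places chance performance at 0.5. The function name and this particular normalization are assumptions, not the study's stated formula.

```python
def recognition_accuracy(hits, n_targets, false_alarms, n_foils):
    """Map (hit rate - false-alarm rate) from [-1, 1] onto [0, 1].

    Assumed normalization: 1.0 is perfect discrimination, 0.5 is chance,
    and 0.0 means every response was wrong.
    """
    if n_targets <= 0 or n_foils <= 0:
        raise ValueError("need at least one target and one foil")
    if not (0 <= hits <= n_targets and 0 <= false_alarms <= n_foils):
        raise ValueError("counts must lie between 0 and the number of stimuli")
    hit_rate = hits / n_targets
    false_alarm_rate = false_alarms / n_foils
    return (hit_rate - false_alarm_rate + 1.0) / 2.0


# Hypothetical participant: recognizes all 3 study artworks, accepts 1 of 3 foils.
example_ra = recognition_accuracy(hits=3, n_targets=3, false_alarms=1, n_foils=3)
```

Subtracting the false-alarm rate penalizes a participant who simply answers "familiar" to every stimulus, which a raw proportion of hits would not.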

Secondary exploratory analyses examined whether outcomes differed between congenitally blind and acquired-blindness groups.

5.4 Procedure

Each participant attended two sessions separated by approximately 24 hours. In Session 1, participants explored the three artworks (each under its assigned condition) over approximately 45 minutes, with short breaks between artworks. The spatial recall task was administered immediately after each exploration in Session 1. In Session 2, the recognition task was administered, followed by a structured interview exploring subjective experience. All sessions were conducted by the same trained researcher.

6. Results

6.1 Recognition Accuracy

Recognition accuracy scores were analyzed using a mixed-design analysis of variance (ANOVA) with condition (Layered vs. Flat) as the within-subjects factor and blindness group (Congenital vs. Acquired) as a between-subjects factor. The main effect of condition was statistically significant ($F(1, 22) = 18.34$, $p < .001$, $\eta_p^2 = 0.45$), indicating that participants correctly recognized artworks substantially more often following layered exploration than flat exploration. Mean RA was 0.74 (SD = 0.12) in the Layered condition and 0.51 (SD = 0.16) in the Flat condition. The interaction between condition and group was not significant, suggesting the benefit of layered presentation was comparable for congenitally blind and acquired-blindness participants.

[Conceptual diagram (author-generated): Figure 3 shows a grouped bar chart with error bars representing ±1 SD. The x-axis shows two groups: "Congenitally Blind" and "Acquired Blindness." The y-axis shows Mean Recognition Accuracy from 0 to 1.0. Within each group, two bars are shown side by side: a dark bar for the Layered condition and a light bar for the Flat condition. In both groups, the Layered bar (approximately 0.73–0.75) is notably taller than the Flat bar (approximately 0.50–0.52). Error bars are similar in height across conditions and groups. A horizontal dashed line at 0.5 indicates chance level.]

6.2 Spatial Recall

Cohen's kappa for inter-rater agreement on Spatial Recall Scores indicated substantial agreement [34]. Disagreements were resolved by discussion. A paired-samples t-test comparing SRS between conditions revealed a significant difference ($t(23) = 6.12$, $p < .001$, Cohen's $d = 1.25$), with higher scores in the Layered condition (M = 3.81, SD = 0.74) than in the Flat condition (M = 2.54, SD = 0.89). Participants in the Layered condition were notably more accurate in describing relative positions of elements, identifying which elements belonged to the foreground versus background, and articulating the overall spatial organization of the composition.
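For readers unfamiliar with the agreement statistic used for the SRS ratings, the sketch below computes unweighted Cohen's kappa for two raters. The ratings shown are hypothetical and are not the study's data, and the source does not say whether an unweighted or a weighted kappa was used for the 5-point scale, so the unweighted form is an assumption.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Unweighted Cohen's kappa: agreement between two raters corrected for chance."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("both raters must score the same non-empty set of items")
    n = len(ratings_a)
    # Observed proportion of items on which the raters agree exactly.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Agreement expected by chance from each rater's marginal category frequencies.
    counts_a, counts_b = Counter(ratings_a), Counter(ratings_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (observed - expected) / (1.0 - expected)


# Hypothetical 5-point ratings for eight responses, for illustration only.
rater_1 = [1, 2, 3, 4, 5, 3, 4, 2]
rater_2 = [1, 2, 3, 4, 5, 3, 3, 2]
kappa = cohens_kappa(rater_1, rater_2)
```

Because unweighted kappa treats a one-point disagreement the same as a four-point disagreement, a weighted variant is sometimes preferred for ordinal scales.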

Exploratory analysis suggested that the SRS advantage for the Layered condition was somewhat larger for artworks with higher compositional complexity (i.e., The Starry Night and American Gothic) than for the simpler Girl with a Pearl Earring, although this artwork × condition interaction did not reach statistical significance in this relatively small sample.

6.3 Usability and Affective Ratings

Mean SUS-equivalent scores were 76.4 (SD = 11.2) for the Layered condition and 62.1 (SD = 14.8) for the Flat condition, both on a 0–100 scale. The Layered condition thus exceeded the commonly cited SUS "good" threshold of 71.4 [32], while the Flat condition fell below it. Paired t-test comparison confirmed a significant difference ($t(23) = 4.38$, $p < .001$).
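As background for the 0–100 scale, the standard ten-item SUS is scored as sketched below. The study used a modified SUS whose exact items and scoring are not given, so this shows only the conventional procedure, and the function name is hypothetical.

```python
def sus_score(responses):
    """Score a standard 10-item SUS questionnaire (each response on a 1-5 scale).

    Odd-numbered items are positively worded and contribute (response - 1);
    even-numbered items are negatively worded and contribute (5 - response).
    The summed contributions (0-40) are multiplied by 2.5 to give 0-100.
    """
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("expected ten responses, each an integer from 1 to 5")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        total += (response - 1) if item_number % 2 == 1 else (5 - response)
    return total * 2.5


# Hypothetical neutral respondent who answers 3 to every item.
neutral_score = sus_score([3] * 10)
```

The alternating item polarity is why raw responses cannot simply be averaged to obtain a SUS score.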

Affective ratings indicated higher levels of interest and engagement in the Layered condition. Specifically, items related to "feeling of understanding" and "desire to explore further" were rated significantly higher under the Layered condition. Free-text responses from structured interviews (coded thematically) identified several recurring themes:

- Appreciation for the progressive disclosure approach, described by multiple participants as enabling a sense of "building up" the image.

- The value of spatially coherent audio descriptions in anchoring the tactile information.

- Occasional reports of frustration when the audio description lagged behind the user's manual exploration speed, suggesting a need for more responsive triggering logic.

- A minority of participants (n=4) expressed preference for accessing all layers simultaneously in a free-exploration mode, indicating some user heterogeneity in preferred interaction style.

| Outcome Measure | Layered (M ± SD) | Flat (M ± SD) | Test Statistic | p-value | Effect Size |
|---|---|---|---|---|---|
| Recognition Accuracy (RA) | 0.74 ± 0.12 | 0.51 ± 0.16 | F(1,22) = 18.34 | <.001 | η²p = 0.45 |
| Spatial Recall Score (SRS) | 3.81 ± 0.74 | 2.54 ± 0.89 | t(23) = 6.12 | <.001 | d = 1.25 |
| Usability Score (SUS-equiv.) | 76.4 ± 11.2 | 62.1 ± 14.8 | t(23) = 4.38 | <.001 | d = 0.89 |
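The effect sizes in the table can be cross-checked against the reported test statistics using standard conversions. The sketch below assumes that the reported d values are paired-samples effect sizes of the form $d_z = t / \sqrt{n}$ with n = 24 pairs, and that partial eta squared follows $\eta_p^2 = F \cdot df_1 / (F \cdot df_1 + df_2)$. The source does not state which formulas were used, so the match is a consistency check rather than a confirmation of method.

```python
import math


def cohens_dz(t_value, n_pairs):
    """Paired-samples effect size recovered from a t statistic: d_z = t / sqrt(n)."""
    return t_value / math.sqrt(n_pairs)


def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared recovered from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Test statistics as reported in the results table (N = 24 participants).
srs_d = cohens_dz(6.12, 24)
sus_d = cohens_dz(4.38, 24)
ra_eta = partial_eta_squared(18.34, 1, 22)
```

Under these assumptions the recomputed values round to 1.25, 0.89, and 0.45, matching the table.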

7. Discussion

7.1 Interpretation of Main Findings

The central finding of this study—that layered semantic presentation of artworks on a tactile display significantly improves recognition accuracy and spatial recall compared to undifferentiated flat rendering—is consistent with predictions derived from cognitive theories of haptic perception and from established principles of multimodal HCI design. The effect sizes observed are notably large, particularly for spatial recall (Cohen's d = 1.25), suggesting that the difference between layered and flat tactile exploration is not merely statistically detectable but practically meaningful.

The theoretical mechanism underlying this advantage can be interpreted within the framework of Baddeley's model of working memory [35]. When a user explores a complex tactile image without semantic structuring, the limited capacity of the phonological loop and visuospatial sketchpad must simultaneously handle feature detection, spatial mapping, and meaning attribution. The layered approach effectively distributes this cognitive load across time, allowing each semantic layer to be encoded and consolidated before the next is introduced. This is consistent with Sweller's cognitive load theory [36], which predicts that reducing intrinsic and extraneous load through better instructional design (or, in this case, better interface design) should improve learning and retention outcomes.

The absence of a significant interaction between condition and blindness onset group is worth noting. Some prior work has suggested that individuals with congenital blindness may have superior haptic spatial processing skills compared to those with acquired blindness, because the former group has developed non-visual spatial strategies throughout life [28], [37]. The present results do not contradict this general claim—the congenitally blind group showed numerically slightly higher scores in both conditions—but they suggest that the benefit of the layered interface design is not specific to either group. This is practically important, as it implies that the system can be deployed for diverse blind user populations without requiring differentiated presentation strategies.

7.2 Multimodality and Its Role

The integration of spatially coherent audio descriptions with the tactile display was a deliberate design choice. Because audio was available in both conditions but was indexed to semantic layers only in the Layered condition (Section 5.2), the present design cannot fully disentangle the contribution of tactile layering from the contribution of layer-specific audio indexing. This is an acknowledged limitation. Future work should include conditions that vary the two factors independently, for example layered tactile content paired with whole-image audio, or either rendering without audio, to permit this separation.

That said, the qualitative feedback strongly suggests that participants perceived the combination of layered tactile content and spatially indexed audio as mutually reinforcing rather than redundant. Several participants described the audio as serving a "labeling" function—helping them assign meaning to tactile features they had felt but not yet identified—while the tactile layer served a "grounding" function—giving physical reality to spatial relationships described verbally. This cross-modal complementarity is well-documented in the multimodal HCI literature [18], [19] and appears to extend naturally to the domain of tactile art exploration.

The frustration that some participants reported regarding audio description latency is consistent with known challenges in real-time, event-triggered audio feedback systems. The hand-tracking system used in this study operated at approximately 30 frames per second (about 33 ms between frames) and introduced a mean response latency of approximately 120 ms between the user's finger entering a region and the onset of the corresponding audio description. While this latency is within the range typically considered imperceptible for many tasks [38], in dynamic haptic exploration, where users move their fingers continuously, the finger may already have moved on by the time the description begins, causing occasional temporal misalignment between tactile and auditory stimuli. Future system iterations should investigate whether reducing response latency to below 50 ms, which may be achievable with current computer vision hardware, mitigates this concern.
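To make the misalignment concern concrete, the following minimal sketch converts latency into the distance a fingertip travels before the audio begins. The frame rate, mean latency, and 50 ms target come from the text; the fingertip speeds and all function names are illustrative assumptions, since the study does not report exploration speeds.

```python
def frame_interval_ms(fps: float) -> float:
    """Time between two consecutive tracking frames, in milliseconds."""
    return 1000.0 / fps


def finger_travel_mm(speed_mm_per_s: float, latency_ms: float) -> float:
    """Distance the fingertip moves while the audio description is pending."""
    return speed_mm_per_s * latency_ms / 1000.0


TRACKING_FPS = 30.0        # reported in the text (approximate)
MEAN_LATENCY_MS = 120.0    # reported in the text (approximate)
TARGET_LATENCY_MS = 50.0   # target proposed in the text

# Hypothetical fingertip speeds; the study does not report these.
ASSUMED_SPEEDS_MM_PER_S = (20.0, 50.0, 100.0)

if __name__ == "__main__":
    print(f"frame interval: {frame_interval_ms(TRACKING_FPS):.1f} ms")
    for speed in ASSUMED_SPEEDS_MM_PER_S:
        current = finger_travel_mm(speed, MEAN_LATENCY_MS)
        target = finger_travel_mm(speed, TARGET_LATENCY_MS)
        print(f"{speed:5.0f} mm/s: {current:.1f} mm at 120 ms, "
              f"{target:.1f} mm at 50 ms")
```

Under these assumed speeds, the travel distance scales linearly with latency, so whether a given offset matters would depend on the size of the semantic regions on the display.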

7.3 Implications for HCI Design

The findings of this study have several implications for the design of accessible HCI systems more broadly. First, they provide empirical support for the principle of semantic layering as a design strategy for tactile information displays. This principle—decomposing complex information into semantically coherent units and presenting them progressively—may have applications beyond artwork exploration, including tactile map navigation, tactile data visualization, and haptic interfaces for scientific and educational diagrams.

Second, the results highlight the importance of spatial coherence in multimodal interfaces for blind users. The audio descriptions were effective partly because they were anchored to specific spatial locations on the display, allowing users to correlate what they heard with what they felt. Generic, non-spatially-indexed audio descriptions (as used in many existing museum audio guide systems) may not confer the same benefit, although this comparison was not tested directly here. This suggests that the technical complexity of implementing hand-tracking-based spatial audio indexing is likely justified by the usability gain.
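The idea of anchoring audio to display locations can be sketched as a simple lookup from a tracked finger position to a description. This is a hypothetical illustration, not the system's implementation: the `AudioRegion` type, the rectangular regions, and the function name are all assumptions (a real system would more plausibly use segmentation masks).

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class AudioRegion:
    """Hypothetical rectangular region in pin coordinates (inclusive bounds)."""
    layer: str
    x0: int
    y0: int
    x1: int
    y1: int
    description: str

    def contains(self, x: int, y: int) -> bool:
        return self.x0 <= x <= self.x1 and self.y0 <= y <= self.y1


def describe_at(regions: Sequence[AudioRegion], layer: str,
                x: int, y: int) -> Optional[str]:
    """Return the description for the first region of the active layer
    that contains the finger position, or None if nothing matches."""
    for region in regions:
        if region.layer == layer and region.contains(x, y):
            return region.description
    return None


if __name__ == "__main__":
    # Illustrative regions only; not taken from the study's artworks.
    regions = [
        AudioRegion("foreground", 2, 2, 6, 6, "a seated figure"),
        AudioRegion("background", 0, 0, 9, 9, "an open sky"),
    ]
    print(describe_at(regions, "foreground", 4, 4))
    print(describe_at(regions, "foreground", 8, 8))
```

Filtering by the active layer keeps the spoken description consistent with what is currently raised on the display, which is the spatial coherence property discussed above.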

Third, the heterogeneity in user preferences, with a minority of participants preferring simultaneous multi-layer access, underscores the importance of designing adaptive or user-configurable interaction modes in assistive technology. A one-size-fits-all sequential presentation may be suboptimal for experienced tactile users, who may benefit more from unrestricted layer access combined with an overview of the whole composition. The system described here supports both guided and free-exploration modes, but future research should more rigorously characterize the individual user factors that predict preference for each mode.
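One way to picture the two interaction modes is as a rule for choosing which layers are raised on the pin array. The sketch below is an assumption-laden illustration: the layer names, their order, the one-layer-at-a-time behavior of guided mode, and all identifiers are hypothetical rather than taken from the system.

```python
from enum import Enum
from typing import Dict, Iterable, List

Grid = List[List[int]]  # 1 = pin raised, 0 = pin lowered

# Assumed presentation order; the actual order is not specified here.
LAYER_ORDER = ("foreground", "midground", "background")


class Mode(Enum):
    GUIDED = "guided"  # assumed: one layer at a time, in a fixed order
    FREE = "free"      # assumed: any user-selected combination of layers


def active_layers(mode: Mode, step: int = 0,
                  selected: Iterable[str] = ()) -> List[str]:
    """Return the names of the layers to render for the given mode."""
    if mode is Mode.GUIDED:
        index = max(0, min(step, len(LAYER_ORDER) - 1))
        return [LAYER_ORDER[index]]
    chosen = set(selected)
    return [name for name in LAYER_ORDER if name in chosen]


def composite(layers: Dict[str, Grid], names: Iterable[str]) -> Grid:
    """Combine the named layers: a pin is raised if any layer raises it."""
    first = next(iter(layers.values()))
    out = [[0] * len(first[0]) for _ in first]
    for name in names:
        for r, row in enumerate(layers[name]):
            for c, pin in enumerate(row):
                if pin:
                    out[r][c] = 1
    return out
```

A free-mode call such as `composite(layers, active_layers(Mode.FREE, selected={"foreground", "background"}))` would raise both layers at once, which corresponds to the simultaneous access that some participants preferred.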

7.4 Limitations

Several limitations of this study warrant acknowledgment. The sample size of 24 participants, while adequate for detecting large effects, is insufficient for reliable detection of moderate or small effects, for subgroup analyses by artwork, or for modeling individual difference predictors of outcome. Replication with larger samples is necessary before strong conclusions can be drawn.
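The sample-size point can be illustrated with a rough power calculation. The sketch below assumes, purely for illustration, a two-sided paired comparison across all 24 participants and uses a normal approximation to the test statistic; the study's actual analysis may differ, and the approximation slightly overstates power at small n. The effect sizes are Cohen's conventional benchmarks, not values from the study.

```python
from math import sqrt
from statistics import NormalDist


def approx_paired_power(d_z: float, n: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided paired test (normal approximation).

    d_z is the standardized mean of the paired differences, n the number
    of participants. Assumption: normality of the test statistic.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1.0 - alpha / 2.0)
    shift = d_z * sqrt(n)
    # Probability of exceeding the critical value in either tail.
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


if __name__ == "__main__":
    N_PARTICIPANTS = 24  # from the text
    # Conventional "small", "medium", "large" benchmarks (illustrative).
    for label, d in (("small", 0.2), ("medium", 0.5), ("large", 0.8)):
        power = approx_paired_power(d, N_PARTICIPANTS)
        print(f"{label:6s} d_z={d:.1f}: approx. power {power:.2f}")
```

Under these assumptions, power exceeds the conventional 0.80 threshold for a large effect but falls below it for medium and small effects, in line with the limitation stated above.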

The artwork selection, while deliberately varied, covered only a narrow range of Western figurative painting. It is unclear whether the findings would generalize to abstract art, three-dimensional sculpture, photography, or non-Western artistic traditions, all of which present different challenges for semantic decomposition and tactile rendering.

The 24-hour retention interval for the recognition test, while longer than many laboratory HCI studies, does not speak to long-term retention. Whether the advantage of layered exploration persists over weeks or months—relevant for museum visit outcomes—is an open question.

Finally, the semi-automated segmentation pipeline, which required expert human annotation, is not currently scalable to large collections. As automated semantic segmentation of artwork improves, this constraint may be relaxed, but at present, the system described here is most suitable for curated collections of high-importance works where the investment of expert annotation is justified.

7.5 Future Directions

Several natural extensions of this work present themselves. First, investigating higher-resolution tactile display hardware—such as the emerging generation of microfluidic or shape-memory alloy actuated pin arrays capable of 100×100 resolution at similar form factors—would allow finer detail rendering and more nuanced layer differentiation. Second, incorporating machine learning–based automatic artwork segmentation that is robust to artistic style variation would greatly reduce the preparation burden for museum deployments. Third, longitudinal studies tracking visitors' artwork knowledge and affective engagement with museum collections before and after tactile exploration would provide ecologically valid evidence of real-world impact.

An intriguing longer-term direction involves the use of generative AI models to produce customized tactile simplifications of artworks on demand, tailored to a given user's experience level and prior knowledge. Combined with large language model–based audio description generation, this could in principle support a fully automated pipeline from original high-resolution artwork to a personalized, layered, multimodal tactile experience. Such a system would require careful validation for accuracy and accessibility quality, but the technological components are increasingly available.

8. Conclusion

This paper has presented a case study examining the effects of layered semantic artwork presentation via a multimodal tactile display system on artwork recognition and spatial comprehension among blind users. Twenty-four adults with blindness explored three artworks under two conditions (layered semantic presentation and flat, undifferentiated presentation), with results demonstrating significant advantages for the layered approach on all three primary outcome measures: recognition accuracy, spatial recall, and usability/affective ratings. The findings support semantic decomposition as a principled design strategy for tactile HCI, are consistent with the value of spatially indexed audio descriptions as a complement to tactile content (although the present design cannot isolate their contribution), and highlight the importance of adaptive interaction modes to accommodate diverse user preferences.

More broadly, this work contributes to the growing body of evidence that thoughtful interface design for assistive technology can close meaningful gaps in cultural access for people with disabilities. Art is not a peripheral concern in this regard—it is a core domain of human experience and cultural participation, and the design of systems that make it genuinely accessible to blind users is both a technical challenge and a moral imperative. The results presented here offer concrete, evidence-based guidance for practitioners, researchers, and institutions working toward that goal.

As tactile display technology continues to advance and as the tools of computer vision and AI become more accessible to cultural institutions, the barriers to scalable, high-quality tactile artwork exploration are declining. The design principles and empirical findings reported in this study provide a foundation on which future systems can build, with the ultimate aim of enabling every visitor—regardless of visual ability—to engage fully and meaningfully with the visual arts.

References

[1] World Health Organization, "Blindness and vision impairment," WHO Fact Sheets, Geneva, Switzerland, Oct. 2023. [Online]. Available: https://www.who.int/news-room/fact-sheets/detail/blindness-and-visual-impairment

[2] A. Gorlewicz and R. L. Kirby, "Trends in tactile graphics for accessible STEM materials: A systematic review," ACM Trans. Access. Comput., vol. 14, no. 3, pp. 1–34, Sept. 2021.

[3] L. Landau, S. L. Miele, and A. Trief, "Talking tactiles: Providing access to museum experiences for people with visual disabilities," in Proc. Museums and the Web Conf., Denver, CO, USA, 2003, pp. 1–10.

[4] Z. T. Zebehazy and A. Wilton, "Quality, importance, and instruction: The perspectives of teachers of students with visual impairments on student graphic literacy," J. Visual Impairment Blindness, vol. 108, no. 1, pp. 5–16, Jan.–Feb. 2014.

[5] D. T. V. Pawluk, R. J. Adams, and M. Kitada, "Designing simpler tactile displays: Effects on performance and preference in braille and tactile graphics tasks," IEEE Trans. Haptics, vol. 8, no. 1, pp. 11–21, Jan.–Mar. 2015.

[6] H. P. Palani, K. E. Tennison, A. C. Giudice, and N. A. Giudice, "Principles for the design of refreshable tactile graphics: An evaluation of three tactile display technologies," ACM Trans. Access. Comput., vol. 12, no. 1, pp. 1–32, Mar. 2019.

[7] C. Sjöström, "Non-visual haptic interaction design: Guidelines and applications," Ph.D. dissertation, Dept. Commun. Syst., Lund Univ., Lund, Sweden, 2001.

[8] Y. Shimizu, S. Saida, and H. Shimura, "Tactile pattern recognition by graphic displays: Importance of 3-D information for haptic perception of familiar two-dimensional patterns," Perception & Psychophysics, vol. 53, no. 1, pp. 43–48, 1993.

[9] M. Rotard, C. Knödler, and T. Ertl, "A tactile web browser for the visually disabled," in Proc. ACM Symp. Document Eng. (DocEng), New York, NY, USA, 2005, pp. 15–24.

[10] M. D. C. Tobin, Tactile Pictures: Reflections on their Development and Use. Birmingham, U.K.: Univ. Birmingham, Research Centre for the Education of the Visually Handicapped, 1972.

[11] American Printing House for the Blind, Guidelines and Standards for Tactile Graphics, Louisville, KY, USA: APH, 2010. [Online]. Available: https://www.aph.org/research/tactile-graphics-guidelines/

[12] R. L. Klatzky and S. J. Lederman, "Haptic object perception: Spatial dimensionality and relation to vision," Philos. Trans. R. Soc. Lond. B Biol. Sci., vol. 366, no. 1581, pp. 3097–3105, Nov. 2011.

[13] H. P. Palani and N. A. Giudice, "Principles of haptic access to graphical information: A review and classification," in Proc. 16th Int. ACM SIGACCESS Conf. Computers & Accessibility (ASSETS), New York, NY, USA, 2014, pp. 107–114.

[14] S. Follmer, D. Leithinger, A. Olwal, A. Hogge, and H. Ishii, "inFORM: Dynamic physical affordances and constraints through shape and object actuation," in Proc. 26th ACM Symp. User Interface Software Technol. (UIST), New York, NY, USA, 2013, pp. 417–426.

[15] N. A. Giudice, H. P. Palani, E. Brenner, and K. M. Kramer, "Learning non-visual graphical information using a touch-based vibro-audio interface," in Proc. 14th Int. ACM SIGACCESS Conf. Computers & Accessibility (ASSETS), New York, NY, USA, 2012, pp. 103–110.

[16] L. Engel, S. M. Nikolaev, and A. Holz, "Exploring data through touch: Insights from tactile charts with screen reader support," ACM Trans. Access. Comput., vol. 16, no. 2, pp. 1–28, Jun. 2023.

[17] S. K. Kane, J. P. Bigham, and J. O. Wobbrock, "Slide rule: Making mobile touch screens accessible to blind people using multi-touch interaction techniques," in Proc. 10th Int. ACM SIGACCESS Conf. Computers & Accessibility (ASSETS), New York, NY, USA, 2008, pp. 73–80.

[18] S. A. Wall and S. Brewster, "Sensory substitution using tactile pin arrays: Human factors, technology and applications," Signal Process., vol. 86, no. 12, pp. 3674–3695, Dec. 2006.

[19] W. Bernsen, "Modality theory in support of multimodal interface design," in Proc. AAAI Spring Symp. Intelligent Multi-Media Multi-Modal Systems, Stanford, CA, USA, 1994, pp. 37–44.

[20] S. A. Brewster, F. Raty, and A. Kortekangas, "Enhancing scanning keyboards with non-speech sounds," in Proc. 1st Eur. Conf. Disability, Virtual Reality & Associated Technologies, Maidenhead, U.K., 1996, pp. 235–244.

[21] H. P. Palani, A. C. Giudice, and N. A. Giudice, "Evaluation of non-visual zooming for exploration of tactile maps," in Proc. 17th Int. ACM SIGACCESS Conf. Computers & Accessibility (ASSETS), New York, NY, USA, 2015, pp. 3–10.

[22] C. Candlin, "Touch and the limits of the professional eye," in Touch in Museums: Policy and Practice in Object Handling, L. Pye, Ed. Oxford, U.K.: Berg, 2007, pp. 9–24.

[23] L. C. Chen, G. Papandreou, F. Schroff, and H. Adam, "Rethinking atrous convolution for semantic image segmentation," arXiv:1706.05587, Jun. 2017.

[24] M. Rotard, S. Philipp-Foliguet, and T. Ertl, "High-quality rendering of digital pictures for a tactile display," in Proc. ACM SIGCHI Conf. Human Factors Comput. Syst. (CHI), New York, NY, USA, 2008, pp. 2131–2140.

[25] A. Bhattacharya, S. Zhang, and C. Bennett, "Towards automated generation of tactile graphics: Challenges and opportunities," in Proc. 22nd Int. ACM SIGACCESS Conf. Computers & Accessibility (ASSETS), New York, NY, USA, 2020, pp. 1–12.

[26] T. Holloway, K. Marriott, and A. Butler, "Accessible SVG: Design, implementation and evaluation," in Proc. 22nd Int. World Wide Web Conf. (WWW), New York, NY, USA, 2013, pp. 609–618.

[27] M. Heller and E. Gentaz, Psychology of Touch and Blindness. New York, NY, USA: Psychology Press, 2014.

[28] M. A. Heller, "Haptic dominance in form perception: Vision versus proprioception," Perception, vol. 21, no. 5, pp. 655–660, 1992.

[29] J. Luk, J. Pasquero, S. Little, K. MacLean, V. Levesque, and V. Hayward, "A role for haptics in mobile interaction: Initial design using a handheld tactile display prototype," in Proc. ACM SIGCHI Conf. Human Factors Comput. Syst. (CHI), New York, NY, USA, 2006, pp. 171–180.

[30] L.-C. Chen, Y. Zhu, G. Papandreou, F. Schroff, and H. Adam, "Encoder-decoder with atrous separable convolution for semantic image segmentation," in Proc. Eur. Conf. Comput. Vision (ECCV), Munich, Germany, Sept. 2018, pp. 833–851.

[31] D. A. Norman, The Design of Everyday Things, Revised and Expanded ed. New York, NY, USA: Basic Books, 2013.

[32] J. Brooke, "SUS: A quick and dirty usability scale," in Usability Evaluation in Industry, P. W. Jordan, B. Thomas, B. A. Weerdmeester, and A. L. McClelland, Eds. London, U.K.: Taylor & Francis, 1996, pp. 189–194.

[33] K. R. Scherer and A. Ceschi, "Lost luggage: A field study of emotion–antecedent appraisal," Motiv. Emot., vol. 21, no. 3, pp. 211–235, 1997.

[34] J. R. Landis and G. G. Koch, "The measurement of observer agreement for categorical data," Biometrics, vol. 33, no. 1, pp. 159–174, Mar. 1977.

[35] A. D. Baddeley, "Working memory," Science, vol. 255, no. 5044, pp. 556–559, Jan. 1992.

[36] J. Sweller, "Cognitive load during problem solving: Effects on learning," Cognitive Sci., vol. 12, no. 2, pp. 257–285, Apr.–Jun. 1988.

[37] M. Büchel, A. Cattaneo, and C. Neely, "Superior tactile acuity in the blind: A meta-analysis," Neuropsychologia, vol. 48, no. 7, pp. 1865–1873, Jun. 2010.

[38] S. A. Brewster and L. M. Brown, "Tactons: Structured tactile messages for non-visual information display," in Proc. 5th Australasian User Interface Conf. (AUIC), Dunedin, New Zealand, 2004, vol. 28, pp. 15–23.
